LLM Application Development for Business

Large language models (LLMs) aren’t just chatbots; they’re a new way to build software that understands context, processes language, and reasons through problems. We help you harness that power for real business applications, not demos.

- OUR APPROACH: BUILD INTELLIGENT APPLICATIONS POWERED BY LANGUAGE

- WHY IT MATTERS: Why LLM applications matter for your business

LLMs enable a new class of software experiences: conversational interfaces, intelligent assistants, document intelligence, and knowledge-driven workflows. Embedded correctly in your stack, they unlock faster operations, better user experiences, and entirely new ways to interact with the data and systems you already own.

Your competitors are already using LLMs to:

- Automate customer support with context-aware assistants, cutting first-response times significantly

- Process thousands of documents (contracts, invoices, reports) in minutes, work that used to take weeks

- Turn natural language into database queries that non-technical teams can run themselves

- Generate personalized content at scale while maintaining brand voice consistency

- Extract actionable insights from unstructured data that was previously unusable

- WHAT WE DELIVER: PRODUCTION-GRADE LLM-POWERED APPLICATIONS

We build applications where LLMs are embedded as core components, not experimental add-ons. Our focus is on robustness, relevance, and control: LLM behavior must support real workflows and measurable business outcomes, with guardrails against the failure modes that have made headlines for other companies.

Not the frustrating rule-based chatbots of the past. Modern AI assistants understand context, remember conversation history across sessions, access your knowledge base in real time, and know when to escalate to a human, because the worst AI assistant is the one that pretends to help but can’t.

Retrieval-Augmented Generation (RAG) combines the reasoning power of LLMs with your proprietary data: your contracts, your policies, your knowledge base, your technical documentation. Users ask questions in natural language and get accurate answers grounded in your documents, with sources cited, which sharply reduces hallucinations on factual questions.

Extract structured information, summarize long content, compare documents side-by-side, and answer questions about your entire document corpus. Works with PDFs, contracts, reports, emails, spreadsheets, and scanned documents, at a speed and scale that manual review cannot match.

Generate, adapt, and personalize content at scale while maintaining your brand voice and tone guidelines. Use cases range from marketing copy to technical documentation and from product descriptions to personalized email sequences, with human review workflows built in, not optional.

- BUSINESS VALUE: Language intelligence that scales with your business

Faster Access to Knowledge

LLM applications let users retrieve insights from complex information instantly, turning hours of document searching into seconds of natural-language questions, and making institutional knowledge actually accessible.

Operational Efficiency

By automating language-heavy tasks such as classification, summarization, extraction, and triage, LLM apps free your teams to focus on higher-value work that actually requires human judgment.

Improved User Experience

Natural language interaction lowers adoption barriers dramatically: users who would have given up on a complex form or dashboard can now just ask a question and get what they need, whether they’re new employees, external customers, or occasional users.

- THE PROCESS: From idea to production AI in 4 disciplined phases

We start by identifying high-value use cases where language intelligence delivers measurable impact. Then we design interaction flows, define guardrails, and architect systems that balance creativity with control.

Our approach ensures LLM applications remain predictable, explainable, and aligned with business logic, even as they scale from 10 users to 10,000.

PHASE 01

- Understand use case and business goals

- Assess available data sources and quality

- Evaluate build versus buy versus customize options

- Prototype key interactions to test assumptions

OUTCOMES

Clear direction & rough prototype

PHASE 02

- Develop core AI functionality

- Integrate with your existing data sources

- Build the user interface

- Implement guardrails and safety layers

- Test with synthetic and real data

PHASE 03

- Pilot with real users in a controlled environment

- Gather structured feedback on quality and latency

- Tune prompts and retrieval logic

- Optimize cost per interaction

- Harden security and access controls

PHASE 04

- Deploy to production with zero-downtime rollout

- Set up monitoring dashboards and alerting

- Train your team for ongoing operation

- Document the architecture, runbooks, and decision log

LLMs unlock a new way to design software. Let's build applications that understand, assist, and act at scale, in production, on your data.

- DECISION FRAMEWORK: Build versus Buy versus Customize

We build reliable, secure, and scalable LLM applications designed for adoption, trust, and long-term value. But before we build, we help you decide whether building is even the right answer. Here’s the framework we use.

USE OFF THE SHELF

- Standard use case with no unique requirements

- No proprietary data or context needed

- Budget is tight and time-to-value matters most

EXAMPLES

- ChatGPT for general queries

- Grammarly for writing assistance

- Claude or Gemini for internal productivity

Our role: We can advise on tool selection, but you don’t need us to build

CUSTOMIZE EXISTING

- You need your own data integrated and accessible

- You have specific workflows that off-the-shelf tools cannot handle

- You want full control over behavior without training from scratch

EXAMPLES

- RAG pipelines on your documents and knowledge base

- Fine-tuned open-source models (Llama, Mistral) on your domain

- API integrations with your existing systems

Our role: This is where we deliver the most value for most of our clients

BUILD CUSTOM

- Truly unique requirements with no market equivalent

- LLM capability is your core competitive advantage

- You need full control over training data and architecture

EXAMPLES

- Custom-trained models on proprietary domain data

- Novel architectures for specific performance requirements

- Edge deployment for on-device inference

Our role: Full development partnership with senior ML engineers

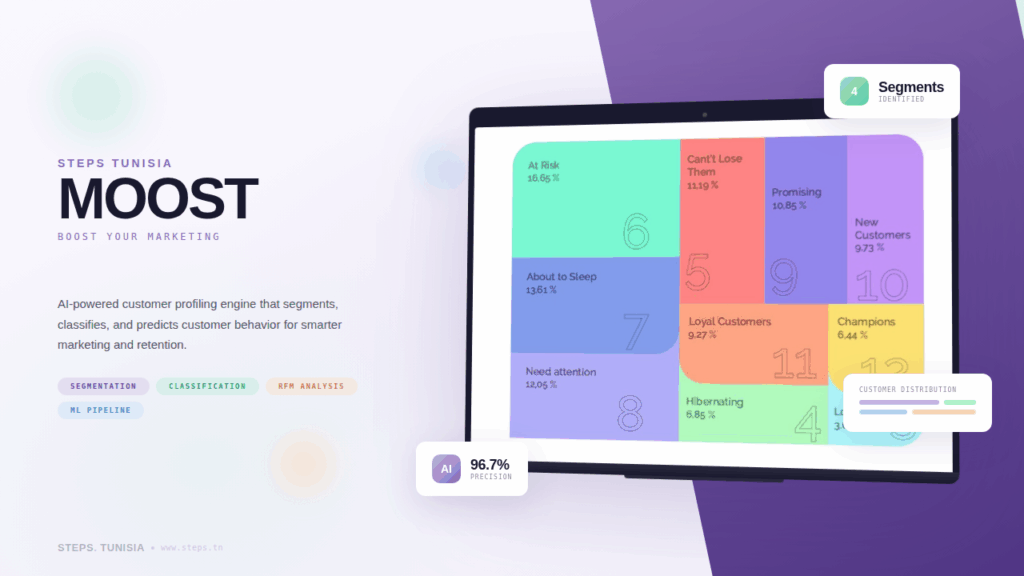

- CASE STUDIES: OUR WORK SPEAKS FOR ITSELF

Explore our portfolio of bold and impactful projects, designed to inspire and deliver excellence.

- FAQ: FREQUENTLY ASKED QUESTIONS

We provide end-to-end LLM solutions including fine-tuning open-source models (such as Llama, Mistral, and others) on your proprietary data, building RAG (Retrieval-Augmented Generation) pipelines, developing custom AI-powered chatbots, and creating internal knowledge assistants that respond to queries specific to your organization’s information.

Absolutely. We specialize in fine-tuning large language models on custom datasets so the model understands your business context. For example, we fine-tuned Llama 3 for Afreximbank to respond to inquiries specific to the bank’s internal information, combining conversational queries with database-level retrieval.

Our LLM services apply across multiple sectors including banking and finance, insurance, healthcare, retail, e-commerce, energy, manufacturing, transportation, and communications. Whether you need automated document processing, intelligent customer support, or internal knowledge management, we tailor the solution to your industry.

Data security is a priority. We can deploy LLM solutions on-premise or within your private cloud environment, ensuring your proprietary data never leaves your infrastructure. We also offer advisory services to help you define the right data governance strategy before any project begins.

RAG (Retrieval-Augmented Generation) combines the power of LLMs with your existing knowledge base. Instead of relying solely on what the model was trained on, RAG retrieves relevant documents from your data in real time and uses them to generate accurate, context-aware responses. This is ideal for organizations that need up-to-date, factual answers drawn from internal repositories.
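To make the retrieve-then-generate loop concrete, here is a minimal Python sketch under loudly stated assumptions: the documents and file names are hypothetical, simple word-overlap cosine similarity stands in for a real embedding model and vector database, and the final LLM call is left out. The hypothetical helpers `retrieve` and `build_prompt` stop at assembling the grounded, source-tagged prompt that would be sent to the model.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base: source name -> document text.
DOCUMENTS = {
    "policy-travel.pdf": "Employees may book economy flights for trips under six hours.",
    "policy-expenses.pdf": "Meal expenses are reimbursed up to the daily limit with receipts.",
    "handbook-leave.pdf": "Annual leave requests must be approved by your line manager.",
}


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a stand-in for a real embedding model (assumption)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 2) -> list[tuple[str, str]]:
    """Return the k documents most similar to the question as (source, text) pairs."""
    q = tokenize(question)
    ranked = sorted(
        DOCUMENTS.items(),
        key=lambda item: cosine(q, tokenize(item[1])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, k: int = 2) -> str:
    """Assemble a grounded prompt: retrieved passages tagged by source, then the question."""
    passages = retrieve(question, k)
    context = "\n".join(f"[{source}] {text}" for source, text in passages)
    return (
        "Answer using only the sources below and cite them by name. "
        "If the answer is not in the sources, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    # The resulting string would be passed to whichever LLM the project uses.
    print(build_prompt("Who approves annual leave requests?"))
```

In a production pipeline, the retrieval step would typically query a vector index built from chunked documents, and the source tags in the prompt are what let the model cite where each answer came from.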

Timelines vary depending on scope. A proof of concept can typically be delivered within 4 to 6 weeks. A full production-ready solution, including data preparation, fine-tuning, testing, and deployment, generally takes 2 to 4 months. We follow an Agile process with regular sprint reviews so you see progress throughout.

We work with a range of open-source LLMs including Llama 3, Mistral, Gemma, DeepSeek, and others. We evaluate which model best fits your use case based on performance, cost, language support, and deployment constraints, and we help you choose the most suitable tech stack for each project.

Yes. We provide post-deployment maintenance, model retraining as your data evolves, performance monitoring, and continuous optimization. Our CTO-as-a-Service and dedicated team models ensure you always have access to expert support.

- BLOG & NEWS: INSIGHTS, TIPS & NEWS

About AI and the digital world

Ready to turn language into a product capability?

Book a 60-minute LLM discovery call. We review your use case, identify whether an off-the-shelf tool, customization, or a custom build is right for you, and provide a written POC plan within 5 business days, with no obligation to continue.