Large Language Models aren’t just chatbots. They’re a new way to build software that understands context, processes language, and reasons through problems. We help you harness that power for real business applications.

- OUR APPROACH: BUILD INTELLIGENT APPLICATIONS POWERED BY LANGUAGE

Large Language Models are not features — they are new product capabilities.

Our LLM Application Development services help organizations design, build, and deploy intelligent applications that understand language, reason over information, and interact naturally with users and systems. We focus on production-ready LLM solutions that are secure, scalable, and aligned with real business use cases.

- YOUR NEEDS: WHY LLMS MATTER

LLMs enable a new class of software experiences — conversational interfaces, intelligent assistants, document intelligence, and knowledge-driven workflows. When embedded correctly, they unlock faster operations, better user experiences, and new ways to interact with data and systems.

Your competitors are already using LLMs to:

- Automate customer support and cut response times by 80%

- Process thousands of documents in minutes instead of weeks

- Turn natural language into database queries

- Generate personalized content at scale

- Extract insights from unstructured data

- WHAT WE DELIVER: PRODUCTION-GRADE LLM-POWERED APPLICATIONS

We build applications where LLMs are embedded as core components, not experimental add-ons.

Our focus is on robustness, relevance, and control — ensuring LLM behavior supports real workflows and business outcomes.

Not the frustrating chatbots of the past. Modern AI assistants understand context, remember conversation history, access your knowledge base, and know when to escalate to humans.

Retrieval-Augmented Generation (RAG) combines the power of LLMs with your proprietary data. Ask questions in natural language, get accurate answers grounded in your documents.

Extract information, summarize content, compare documents, and answer questions about your document corpus. Works with PDFs, contracts, reports, emails, and more.

Generate, adapt, and personalize content while maintaining your brand voice. From marketing copy to technical documentation.

- BUSINESS VALUE: LANGUAGE INTELLIGENCE THAT SCALES

Faster Access to Knowledge

LLM applications enable users to retrieve insights from complex information instantly, reducing search and manual effort.

Operational Efficiency

By automating language-heavy tasks, LLM apps free teams to focus on higher-value work.

Improved User Experience

Natural language interaction lowers adoption barriers and makes complex systems easier to use.

- THE PROCESS: FROM IDEA TO PRODUCTION AI

We start by identifying high-value use cases where language intelligence delivers measurable impact. From there, we design interaction flows, define guardrails, and architect systems that balance creativity with control.

Our approach ensures LLM applications remain predictable, explainable, and aligned with business logic — even as they scale.

PHASE 01

- Understand use case and goals

- Assess data sources

- Evaluate build vs buy

- Prototype key interactions

PHASE 02

- Develop core AI functionality

- Integrate with your data

- Build UI

- Implement guardrails

- Test

PHASE 03

- Pilot with real users

- Gather feedback

- Tune prompts

- Optimize cost

- Harden security

PHASE 04

- Deploy to production

- Set up monitoring

- Train your team

- Document

LLMs unlock a new way to design software. Let’s build applications that understand, assist, and act — at scale.

- YOUR NEEDS: BUILD VS BUY VS CUSTOMIZE

We build reliable, secure, and scalable LLM applications designed for adoption, trust, and long-term value.

Fully aligned with your business and operational reality.

USE OFF THE SHELF

- Standard use case

- No proprietary data needed

- Tight budget

EXAMPLES

- ChatGPT for general queries

- Grammarly

Our role: We can advise, but you don’t need us.

CUSTOMIZE EXISTING

- Need your data integrated

- Some customization needed

EXAMPLES

- RAG on your documents

- Fine-tuned models

- API integrations

Our role: Sweet spot for most clients.

BUILD CUSTOM

- Unique requirements

- Competitive advantage

- Full control

EXAMPLES

- Custom-trained models

- Novel architectures

- Edge deployment

Our role: Full development partnership.

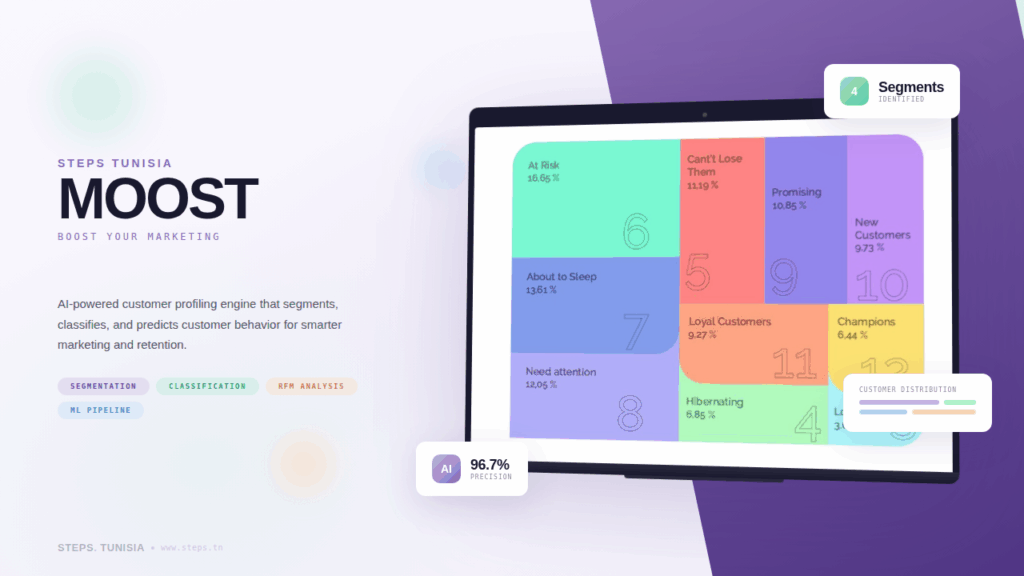

- CASE STUDIES: OUR WORK SPEAKS FOR ITSELF

Explore our portfolio of bold and impactful projects, designed to inspire and deliver excellence.

- FAQ: FREQUENTLY ASKED QUESTIONS

We provide end-to-end LLM solutions including fine-tuning open-source models (such as Llama, Mistral, and others) on your proprietary data, building RAG (Retrieval-Augmented Generation) pipelines, developing custom AI-powered chatbots, and creating internal knowledge assistants that respond to queries specific to your organization’s information.

Absolutely. We specialize in fine-tuning large language models on custom datasets so the model understands your business context. For example, we fine-tuned Llama 3 for Afreximbank to respond to inquiries specific to the bank’s internal information, combining conversational queries with database-level retrieval.

Our LLM services apply across multiple sectors including banking and finance, insurance, healthcare, retail, e-commerce, energy, manufacturing, transportation, and communications. Whether you need automated document processing, intelligent customer support, or internal knowledge management, we tailor the solution to your industry.

Data security is a priority. We can deploy LLM solutions on-premises or within your private cloud environment, so your proprietary data never leaves your infrastructure. We also offer advisory services to help you define the right data governance strategy before any project begins.

RAG (Retrieval-Augmented Generation) combines the power of LLMs with your existing knowledge base. Instead of relying solely on what the model was trained on, RAG retrieves relevant documents from your data in real time and uses them to generate accurate, context-aware responses. This is ideal for organizations that need up-to-date, factual answers drawn from internal repositories.
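
As a rough illustration of the retrieve-then-generate flow described above, here is a minimal Python sketch. It assumes a toy in-memory document list and a simple word-overlap score (bag-of-words cosine similarity) standing in for a real embedding model and vector store. The names `retrieve`, `build_prompt`, and `DOCS` are hypothetical, and the final call to an LLM is deliberately left out.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase and split into alphanumeric word tokens.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two bag-of-words count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    # Rank documents by similarity to the question and keep the top k.
    q = Counter(tokenize(question))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, passages: list[str]) -> str:
    # Place the retrieved passages in the prompt so the model answers from them.
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Hypothetical internal knowledge base (illustrative data only).
DOCS = [
    "Refunds are issued within 14 days of a return request.",
    "Support is available by email from Monday to Friday.",
    "Annual plans are billed once per year in advance.",
]

question = "How many days do refunds take?"
top_passages = retrieve(question, DOCS, k=1)
prompt = build_prompt(question, top_passages)
```

In a real deployment the `prompt` string would be sent to the chosen model, and the word-overlap scoring would typically be replaced by embedding-based search over your indexed documents.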

Timelines vary depending on scope. A proof of concept can typically be delivered within 4 to 6 weeks. A full production-ready solution, including data preparation, fine-tuning, testing, and deployment, generally takes 2 to 4 months. We follow an Agile process with regular sprint reviews so you see progress throughout.

We work with a range of open-source LLMs including Llama 3, Mistral, Gemma, DeepSeek, and others. We evaluate which model best fits your use case based on performance, cost, language support, and deployment constraints, and we help you choose the most suitable tech stack for each project.

Yes. We provide post-deployment maintenance, model retraining as your data evolves, performance monitoring, and continuous optimization. Our CTO-as-a-Service and dedicated team models ensure you always have access to expert support.

- BLOG & NEWS: INSIGHTS, TIPS & NEWS

About AI and the digital world

READY TO GET CLARITY ON YOUR PROJECT?

Don’t wait for the future!

Build it with us now.